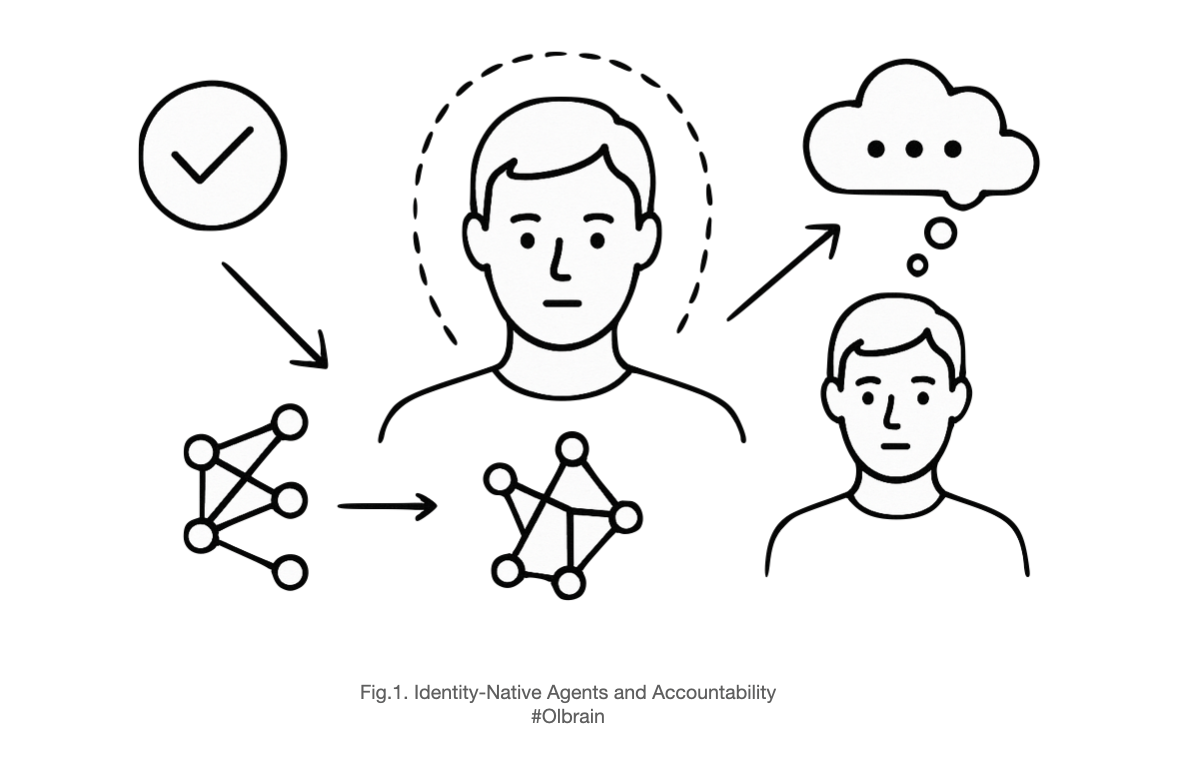

Humans often act first and explain later. We still hold people accountable because a person—an identity—ultimately “signs” the action, even when their conscious story is an imperfect map of the underlying causes. LLM-based systems can be held to the same standard if we design them as Identity‑Native Agents: agents with persistent identity, explicit policies, and auditable decision boundaries that “sign” their actions—even when a black‑box model supplies the inner reasoning.

1) The uncomfortable mirror: humans are partly black boxes

Neuroscience keeps teaching a humbling lesson: much of what steers human behavior runs below awareness. Experiments suggest that subtle cues, such as changes in pupil size, can shift judgments of attractiveness, yet participants deny the influence and spin tidy explanations afterward. The conscious narrative is a press secretary, not the president. It gives reasons; it does not always reveal causes.

Despite this, our moral and legal systems still hold people responsible. Why? Because accountability attaches to the identity that takes the action, not to the transparency of its inner machinery. We punish drunk driving even if a driver gives a poor reason for getting behind the wheel. We reward good outcomes even if people can’t fully articulate how they achieved them. Society demands answerability (you must explain yourself), ownership (you accept the consequences), and correctability (you can change your behavior).

That trio—answerability, ownership, correctability—doesn’t depend on perfect introspective access. It depends on a binding between actions and an agent with a continuing identity.

2) The common mistake in AI: conflating interpretability with responsibility

With LLMs, we often hear: “It’s a black box—so we can’t hold it responsible.” That’s a category error. Responsibility doesn’t live inside the weights; it lives at the boundary where actions are taken and signed.

Demanding white‑box interpretability for every decision is like insisting humans publish their entire neural firing history before we accept their signature. Useful when available? Absolutely. Necessary for accountability? No.

The right move is to insist on identity and governance at the act boundary: who deployed the system, what policy framed the decision, what logs prove what was known, and what remedies exist when things go wrong.

3) Identity‑Native Agents: the design pattern

An Identity‑Native Agent (INA) is an AI agent designed from the ground up to be accountable. It is not “just an LLM with prompts.” It is a stack that separates reasoning from responsibility and attaches a persistent identity to the act. Think of the LLM as a subconscious problem‑solver and the INA as the conscious self that owns the decision.

Minimal architecture

- Identity layer (the “final signer”)
  - A durable identifier (e.g., a DID or other verifiable identity).
  - Ownership metadata: operator, organization, jurisdiction.
  - Capability declaration: what the agent is allowed to do.
- Policy & constraints layer
  - Explicit rules (business, legal, safety) applied before and after model calls.
  - Risk classes that define when to escalate to a human or require multiple checks.
- Reasoning layer (LLM(s) and tools)
  - One or more models propose actions or plans.
  - The models can be swapped without changing the agent’s identity.
- Decision aggregator
  - Chooses among proposals under policy.
  - Computes confidence and expected impact.
  - Can require ensemble agreement or second‑opinion models for high‑risk actions.
- Signature & actuation
  - The identity layer signs the final action.
  - Actuation only occurs on signed outputs.
- Audit & provenance
  - Immutable logs of inputs, prompts, tools used, model versions, confidence, policy checks, and the final signature.
  - Rationale is recorded as a narrative, not as proof of causation.
- Feedback & correction
  - Channels for user appeals and redress.
  - Post‑incident updates to policies and tests.
  - Versioned “system cards” documenting changes over time.

This separation makes the identity layer the place where society can apply obligations and consequences. The black‑box reasoner becomes a replaceable component, like a human’s subconscious skill or a firm’s risk model.
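One way to picture the signature and actuation boundary is the minimal sketch below. It is an illustration under stated assumptions, not a reference design: the names `AgentIdentity`, `SignedAction`, `sign_action`, and `actuate` are hypothetical, and an HMAC with a shared secret stands in for the asymmetric signature (tied to a DID or similar) that a real deployment would more likely use.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical identity layer: who the agent is and what it may do."""
    agent_id: str               # durable identifier, e.g. a DID (assumed format)
    operator: str               # ownership metadata
    jurisdiction: str
    allowed_actions: frozenset  # capability declaration


@dataclass(frozen=True)
class SignedAction:
    agent_id: str
    action: dict
    signature: str


def _canonical(agent_id: str, action: dict) -> bytes:
    # Stable serialization so the same action always yields the same bytes.
    payload = {"agent_id": agent_id, "action": action}
    return json.dumps(payload, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_action(identity: AgentIdentity, action: dict, key: bytes) -> SignedAction:
    """Sign an action only if the capability declaration allows it."""
    if action["type"] not in identity.allowed_actions:
        raise PermissionError(f"action {action['type']!r} is outside declared capabilities")
    digest = hmac.new(key, _canonical(identity.agent_id, action), hashlib.sha256).hexdigest()
    return SignedAction(identity.agent_id, action, digest)


def actuate(signed: SignedAction, key: bytes) -> str:
    """Refuse to act unless the signature matches the action being executed."""
    expected = hmac.new(key, _canonical(signed.agent_id, signed.action), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, signed.signature):
        raise ValueError("unsigned or tampered action; refusing to actuate")
    return f"executed {signed.action['type']}"
```

Nothing in this sketch depends on which model proposed the action, which is the point of the separation: the reasoning layer can change while the signer, its capabilities, and the actuation check stay fixed.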

4) Conscious narrative vs. real reasons: keep the distinction sharp

Human beings routinely confabulate—offering polished stories that don’t capture the causal guts of their decisions. We still demand a story because narratives are useful interfaces for coordination. The same applies to agents:

- Rationale: A coherent, compact explanation generated for humans.

- Reasoning: The actual internal computation. With LLMs, this is distributed across weights, activations, and tool calls.

An INA should always store both:

- Narrative rationales for answerability.

- Operational traces (inputs, tool outputs, scores, policy triggers) for audit.

Treat the narrative as UX. Treat the trace as accountability substrate.
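As a rough sketch of what storing both might look like, the record below keeps the narrative and the trace side by side and labels the narrative explicitly. The class and field names (`DecisionRecord`, `OperationalTrace`, `rationale_kind`) are assumptions made for illustration, not an established schema.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class OperationalTrace:
    """What the system actually saw and did, kept for audit."""
    inputs: dict
    tool_outputs: list
    scores: dict
    policy_triggers: list


@dataclass
class DecisionRecord:
    action: str
    rationale: str                      # human-readable story, for answerability
    trace: OperationalTrace             # operational evidence, for audit
    rationale_kind: str = "narrative"   # flags the rationale as a story, not a causal proof

    def to_json(self) -> str:
        # asdict() recurses into the nested trace dataclass.
        return json.dumps(asdict(self), sort_keys=True)
```

Keeping the two in one record means a reviewer reading the story always has the evidence next to it, and the `rationale_kind` field makes it harder to mistake one for the other.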

5) Objections—and how identity resolves them

Objection 1: “If we can’t interpret the model, we can’t assign blame.”

We blame the agent and the operator who set policy and deployed it. Causal opacity doesn’t prevent responsibility; it shifts responsibility to governance choices: training data, guardrails, escalation thresholds, and post‑incident remedies.

Objection 2: “The agent can’t feel guilt or pay fines.”

Sanctions target the identity’s owner. Reputational scores, usage restrictions, insurance requirements, and financial penalties attach to the operating entity behind the agent. The agent’s identity is the hook; the operator bears the consequences.

Objection 3: “Narratives are just fancy excuses.”

So are many human explanations. We still require them because they reveal intent, awareness of rules, and willingness to be corrected. Combine narratives with logs, tests, and external audits, and you arguably have stronger accountability than many human organizations offer today.

6) A practical accountability checklist

Use this to turn an LLM‑powered system into an Identity‑Native Agent:

- Persistent identity
  - Unique ID, operator, contact, jurisdiction, version.
- Capability contract
  - Enumerate allowed actions and disallowed ones.
  - Include monetary limits, data boundaries, and user‑consent rules.
- Risk tiering
  - Define risk classes (e.g., low, medium, high, critical).
  - Map each class to required controls: second models, human review, or abstain.
- Audit‑by‑design
  - Immutable logs of inputs, prompts, tool calls, model versions, policy checks, and the final signature.
  - Deterministic seeds or reproducibility harness where feasible.
- Rationale layer
  - Human‑readable explanations with uncertainty disclosure (“confidence 0.62; alternatives considered: …”).
  - Mark clearly as “narrative,” not ground truth of internal weights.
- Appeals & remedies
  - Users can challenge outcomes; systems must support reversal and compensation pathways.
  - Document time‑to‑remedy targets.
- Evaluation gates
  - Pre‑deployment tests (adversarial suites, bias probes, red‑team scenarios).
  - Continuous monitoring for distribution shift and incident triggers.
- Change management
  - Version every policy, prompt, and model.
  - Publish diffs in a system card; require approval for high‑risk changes.
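The “immutable logs” item is the one most easily hand-waved, so here is a minimal sketch of one common approach: an append-only, hash-chained log in which each entry commits to the one before it. This makes tampering detectable rather than impossible, and the `AuditLog` class and its methods are hypothetical names; a production system would likely also anchor the chain in external, write-once storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    """Append-only log where every entry hashes the entry before it."""

    def __init__(self) -> None:
        self._entries = []

    def append(self, event: dict) -> str:
        prev = self._entries[-1]["hash"] if self._entries else GENESIS
        # Serialize once so later changes to the caller's dict cannot alter the log.
        body = json.dumps(event, sort_keys=True)
        digest = hashlib.sha256((prev + body).encode("utf-8")).hexdigest()
        self._entries.append({"prev": prev, "event": body, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute the chain; any edited, removed, or reordered entry breaks it."""
        prev = GENESIS
        for entry in self._entries:
            expected = hashlib.sha256((prev + entry["event"]).encode("utf-8")).hexdigest()
            if entry["prev"] != prev or entry["hash"] != expected:
                return False
            prev = entry["hash"]
        return True
```

An auditor who holds only the latest hash can later check that nothing earlier in the log was rewritten, which is what gives the final signature its evidentiary weight.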

7) A concrete flow

Imagine a customer‑refund agent.

- Input: Customer request arrives with order history.

- Pre‑check: Policy says refunds > $200 are “High Risk.”

- Reasoning:
  - Model A proposes “approve, partial credit.”
  - Model B proposes “deny, coupon,” with lower confidence.

- Aggregator: Chooses Model A’s proposal because it satisfies a fairness rule and is predicted to reduce churn.

- Policy gate: High‑risk requires human‑in‑the‑loop; if none available, abstain.

- Signature: Once a human reviewer approves, the agent identity signs the action “approve $220 partial refund.”

- Narrative: “Approved due to repeated delivery failures; projected retention benefit exceeds refund by 1.8×.”

- Audit log: All inputs, models, scores, thresholds, and the final signature recorded.

- Feedback: If customer appeals, human reviews trace; if error found, update tests and policy.

Notice: the reasoning could be black‑box. Accountability remains intact because the final signer is known, policies are explicit, and the action is auditable.
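The gating logic in this flow can be sketched in a few lines. This is an illustration under assumptions, not the definitive procedure: the $200 threshold comes from the example above, while the function names, the `passes_fairness_rule` flag, and the rule “pick the highest-confidence proposal that passes policy” are hypothetical simplifications of whatever an actual aggregator would do.

```python
from dataclasses import dataclass
from typing import Optional

HIGH_RISK_THRESHOLD = 200.0  # from the example policy: refunds > $200 are high risk


@dataclass
class Proposal:
    model: str
    action: str
    confidence: float            # illustrative score, not a calibrated probability
    passes_fairness_rule: bool   # hypothetical policy check result


def risk_class(amount: float) -> str:
    return "high" if amount > HIGH_RISK_THRESHOLD else "low"


def aggregate(proposals: list) -> Optional[Proposal]:
    """Keep only policy-compliant proposals, then take the most confident one."""
    eligible = [p for p in proposals if p.passes_fairness_rule]
    return max(eligible, key=lambda p: p.confidence) if eligible else None


def decide(amount: float, proposals: list, human_approval: Optional[bool]) -> dict:
    """human_approval: True/False from a reviewer, or None if nobody is available."""
    tier = risk_class(amount)
    choice = aggregate(proposals)
    if choice is None:
        return {"status": "abstain", "reason": "no proposal passed policy", "risk": tier}
    if tier == "high":
        if human_approval is None:
            return {"status": "abstain", "reason": "no reviewer available", "risk": tier}
        if not human_approval:
            return {"status": "rejected", "reason": "reviewer declined", "risk": tier}
    return {"status": "ready_to_sign", "action": choice.action,
            "model": choice.model, "risk": tier}
```

For the $220 request, `decide` abstains when `human_approval` is `None` and returns `ready_to_sign` only after a reviewer approves, so the models never decide on their own whether a high-risk action reaches the signer.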

8) Why this mirrors human responsibility

Philosophers sometimes distinguish guidance control (acting from one’s own reasons under one’s own policies) from regulative control (the ability to do otherwise at the moment). Humans often lack fine‑grained introspective access to their reasons, yet we have guidance control via commitments, habits, and rules we endorse. Identity‑Native Agents achieve a parallel form: they operate under declared policies; they can abstain or escalate; and their operators can revise them in light of criticism.

The analogy is not perfect—it’s a working theory, not a metaphysical claim that models have minds. The point is architectural and institutional: make the identity answerable, and you get accountability even when the internals are opaque.

9) Governance that bites

To make INAs socially acceptable, pair the design with enforceable rules:

- Registration & system cards for high‑impact agents.

- Liability on deployers, scaled to risk tier.

- Incident reporting with standardized taxonomies.

- Auditable datasets and evaluations, especially for safety‑critical domains.

- Insurance or bond requirements so victims aren’t left holding the bag.

These mechanisms don’t require peering inside every neuron‑like activation. They require knowing who signed what, under which rules, and with what evidence.

10) Closing thought: the final signer principle

Humans are not transparent to themselves, yet we remain responsible because our identities bind our actions to norms and consequences. LLMs intensify the opacity, but the remedy is the same architectural insight society already uses for people and institutions: accountability attaches to the final signer.

Build agents with identity at the core, policies at the boundary, and logs in the bloodstream. Let black‑box models do their quiet math. Responsibility lives where the action is signed—and where it can be audited, challenged, and repaired.
