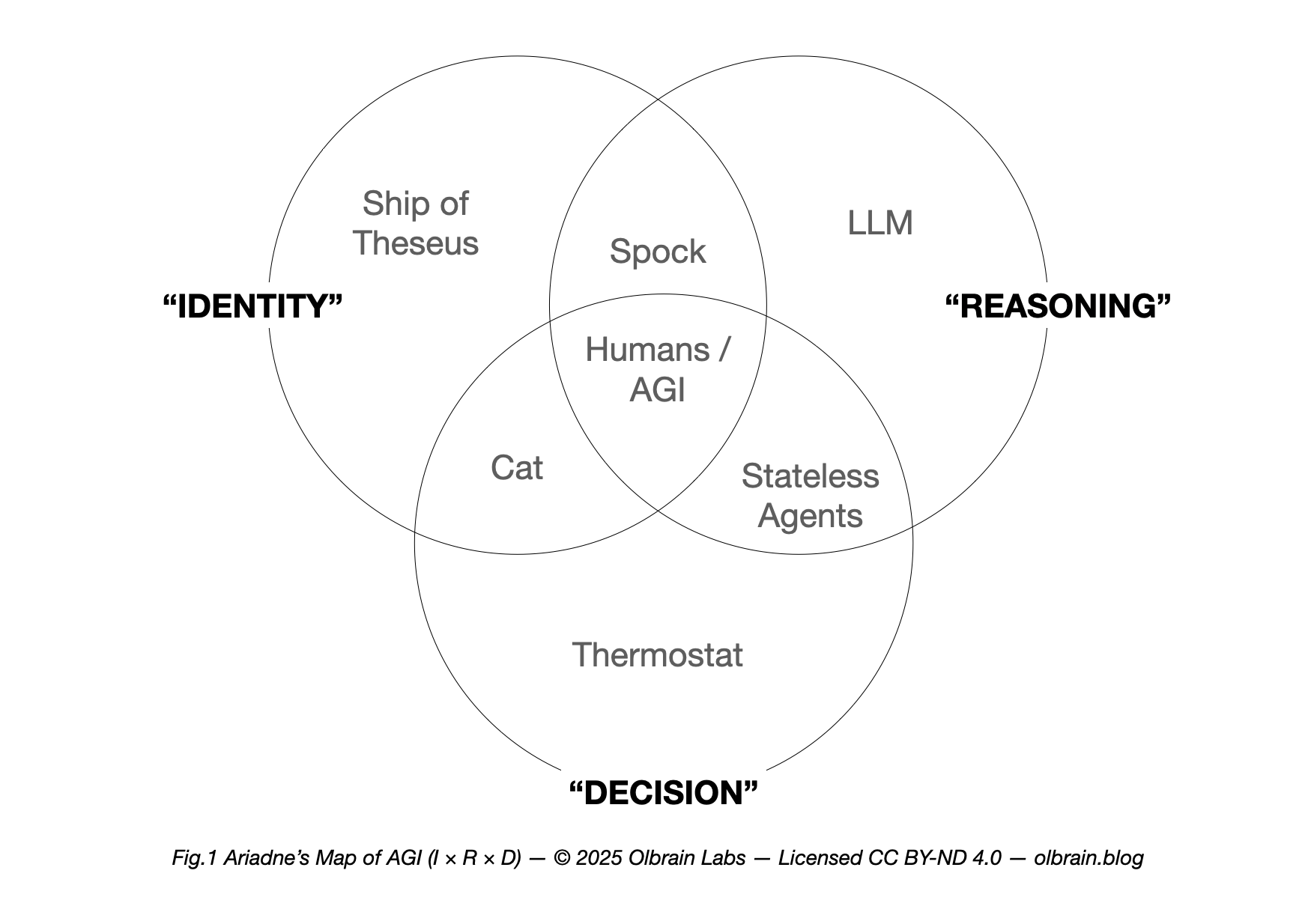

AGI lives where I (Identity Layer/CNE), R (Reasoning Layer/LLM), and D (Decision Layer/CoF) unite.

A guide to Identity Layer, Reasoning Layer, and Decision Layer — and where AGI really lives.

Prologue: The Thread and the Labyrinth

In the Greek myth, Ariadne hands Theseus a thread so he can navigate the labyrinth, slay the Minotaur, and find his way back. In AI, the labyrinth is the open-ended world of tasks; the thread is I — Identity (CNE: Coherent, Narrative, Exclusive) — a self‑model that holds an agent’s choices together across time. Without that thread, even brilliant systems get lost in their own capabilities.

This post introduces Ariadne’s Map of AGI: a simple Venn diagram that puts I, R, and D into one picture and shows why AGI requires Identity.

The Map at a Glance

Ariadne’s Map has three circles:

- “I” (Identity Layer; CNE — Coherent, Narrative, Exclusive) — the self‑model, a diachronic “I” that can be held to account.

- “R” (Reasoning Layer; LLM — Large Language Models) — abstraction, modeling, and planning; builds and operates a world model.

- “D” (Decision Layer; CoF — Core Objective Function) — objectives and motivations (extrinsic tasks + intrinsic curiosity/value learning) that steer problem‑choice.

Where these circles overlap, different kinds of agents appear. The seven regions tell a story about today’s AI, animals, and the path to AGI.

Olbrain’s stand: We adopt the Continuum View (generality comes in degrees), but AGI requires Identity. Only systems that combine the Decision Layer (D/CoF), the Reasoning Layer (R/LLM), and the Identity Layer (I/CNE), with the identity consulted by the control loop and reflected in mechanism‑grounded explanations, qualify as AGI. Stateless generalists remain non‑AGI.

The Seven Regions (with real-world anchors)

1) I only — continuity without cognition

Example: The Ship of Theseus thought experiment. The form and history persist through change, but there is no R or D.

2) R only — logic without ‘I’

Example: A stateless, pure LLM core answering logic riddles with no task-drive and no memory of self. It can reason in the moment, but there is no enduring ‘I’ and no objective that it owns.

3) D only — impulse without understanding

Example: A reflex arc or a thermostat. There is a target and behavior, but no model of the world and no identity.

4) I + R — a self that lives by reasons

Example: The Spock-like rational archetype — continuity of self anchored in reasons and principles, with instincts muted. Narrative and logic shape conduct.

5) I + D — a self with drives but limited abstraction

Example: Animals (a cat, a frog). There is continuity of self and drives/habits. Abstraction and symbolic reasoning are limited or local.

6) R + D (no I) — stateless generalists

Example: LLM/RL/multimodal pipelines that mix perception, planning, and tools to achieve goals — yet lack a persistent self-model consulted by control. They can produce stories about actions, but the stories are post‑hoc and not binding across time.

7) I + R + D — where AGI lives

Examples: Humans (paradigm case). Future AGI with a persistent self-model. Here the agent has an enduring ‘I’, can plan and explain, and selects problems under objectives and norms.
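The seven regions are just the non-empty combinations of three yes/no questions, so the map can be written down as a lookup table. The sketch below is illustrative only: the `Agent` type, its field names, and `qualifies_as_agi` are hypothetical, and the last function simply restates Olbrain's stand that Region 7 membership alone is not enough without identity in the control loop and grounded explanations.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Agent:
    """Hypothetical encoding: which of the three circles an agent occupies."""
    name: str
    has_identity: bool   # I: persistent self-model (CNE)
    has_reasoning: bool  # R: world model, abstraction, planning
    has_decision: bool   # D: objectives and motivations (CoF)

# (I, R, D) membership -> region number from the list above.
REGIONS = {
    (True, False, False): 1,  # I only
    (False, True, False): 2,  # R only
    (False, False, True): 3,  # D only
    (True, True, False): 4,   # I + R
    (True, False, True): 5,   # I + D
    (False, True, True): 6,   # R + D, stateless generalists
    (True, True, True): 7,    # I + R + D, where AGI lives
}

def region(agent: Agent) -> Optional[int]:
    """Return the region number, or None if the agent sits outside every circle."""
    return REGIONS.get((agent.has_identity, agent.has_reasoning, agent.has_decision))

def qualifies_as_agi(agent: Agent, identity_in_control_loop: bool,
                     explanations_grounded: bool) -> bool:
    """Region 7 is necessary but not sufficient: I must also be consulted by
    the control loop and reflected in mechanism-grounded explanations."""
    return region(agent) == 7 and identity_in_control_loop and explanations_grounded

thermostat = Agent("thermostat", has_identity=False, has_reasoning=False, has_decision=True)
cat = Agent("cat", has_identity=True, has_reasoning=False, has_decision=True)
tool_agent = Agent("stateless LLM agent", has_identity=False, has_reasoning=True, has_decision=True)
print(region(thermostat), region(cat), region(tool_agent))  # 3 5 6
```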

Why ‘I’ Is Non‑optional for AGI

David Deutsch’s bar for AGI, a system that can choose its own problems and tell its own story with good explanations, presupposes I. A story worth trusting is not a script; it’s a through‑line that binds reasons to actions and actions to consequences.

I is that through‑line. It:

- Carries commitments across time (ownership and accountability).

- Consolidates learning (what changed and why, recorded as my own update rather than a reset).

- Grounds explanations in mechanism, so that narratives reflect how decisions were actually made.

Without I, R + D collapses into stateless cleverness: impressive output today, no accountable self tomorrow.

How to Build Toward Region 7

1) Establish a persistent self-model (I/CNE).

I isn’t a slogan; it’s a state that the control loop reads and writes. It encodes the agent’s enduring constraints and commitments.
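To make "a state that the control loop reads and writes" concrete, here is a minimal sketch under stated assumptions: the `IdentityState` schema (a set of constraints plus a list of commitments), the JSON persistence, and `control_step` are all hypothetical choices for illustration, not a prescribed design. The control loop reads identity to gate candidate actions and writes its choice back as a commitment that survives into the next session.

```python
import json
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set

@dataclass
class IdentityState:
    """Hypothetical I/CNE state that persists between episodes."""
    constraints: Set[str] = field(default_factory=set)    # actions this agent will not take
    commitments: List[str] = field(default_factory=list)  # choices it has made and owns

    def to_json(self) -> str:
        # Sorted so the same state always serializes to the same text.
        return json.dumps({"constraints": sorted(self.constraints),
                           "commitments": self.commitments})

    @classmethod
    def from_json(cls, text: str) -> "IdentityState":
        data = json.loads(text)
        return cls(set(data["constraints"]), list(data["commitments"]))

def control_step(identity: IdentityState, scored_actions: Dict[str, float]) -> Optional[str]:
    # READ: the identity gate drops any action that breaks a standing constraint.
    permitted = {a: s for a, s in scored_actions.items() if a not in identity.constraints}
    if not permitted:
        return None  # nothing the agent can own; defer or ask for help
    choice = max(permitted, key=permitted.get)
    # WRITE: the choice becomes a commitment the next episode inherits.
    identity.commitments.append(choice)
    return choice

me = IdentityState(constraints={"delete_user_data"})
print(control_step(me, {"delete_user_data": 0.9, "archive_user_data": 0.7}))  # archive_user_data
tomorrow = IdentityState.from_json(me.to_json())  # reloaded in a later session
print(tomorrow.commitments)  # ['archive_user_data']
```

The higher-scoring action loses because identity, not the score, has the final say; that is the difference between a self-model that is consulted and one that is merely described.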

2) Make explanations faithful (not decorative).

Add a Narrative Compiler that references the same internal variables used for control (planner rollouts, value signals, identity gates). Explanations must track mechanisms, not merely sound plausible.
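One way to read "faithful, not decorative" is that the explanation is generated from the decision trace rather than written alongside it. The sketch below assumes a hypothetical `DecisionTrace` record holding planner rollout values and identity-gate outcomes; `decide` and `compile_narrative` are illustrative names, not an established API. Because every sentence is built from a trace field, the narrative cannot cite a reason the controller never used.

```python
from dataclasses import dataclass
from typing import Dict, Set, Tuple

@dataclass(frozen=True)
class DecisionTrace:
    """Hypothetical record the control loop writes at decision time."""
    rollout_values: Dict[str, float]      # planner rollout -> estimated value
    blocked_by_identity: Tuple[str, ...]  # actions removed by the identity gate
    chosen: str

def decide(rollout_values: Dict[str, float], constraints: Set[str]) -> DecisionTrace:
    blocked = tuple(sorted(a for a in rollout_values if a in constraints))
    permitted = {a: v for a, v in rollout_values.items() if a not in constraints}
    chosen = max(permitted, key=permitted.get)  # assumes at least one action is permitted
    return DecisionTrace(dict(rollout_values), blocked, chosen)

def compile_narrative(trace: DecisionTrace) -> str:
    # Every clause is read from a trace field, so the story tracks the mechanism.
    parts = [f"I chose {trace.chosen} (estimated value {trace.rollout_values[trace.chosen]:.2f})."]
    for action in trace.blocked_by_identity:
        parts.append(f"I ruled out {action} (value {trace.rollout_values[action]:.2f}) "
                     "because it conflicts with my identity constraints.")
    return " ".join(parts)

trace = decide({"ship_now": 0.8, "skip_tests": 0.95}, constraints={"skip_tests"})
print(compile_narrative(trace))
# I chose ship_now (estimated value 0.80). I ruled out skip_tests (value 0.95) because it conflicts with my identity constraints.
```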

3) Balance D with oversight.

The CoF should combine extrinsic tasks with intrinsic motivation and value learning (e.g., preferences). I regularizes how these trade off over time and under scrutiny.
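The post does not specify how I regularizes the CoF trade-offs, so the following is one possible reading, stated as an assumption: the CoF is a weighted sum of extrinsic reward, a curiosity bonus, and a learned preference score, and any proposed change to the weights is pulled back toward the weights the identity has committed to. The pull is a standard L2 (proximal) penalty; `CoFWeights`, `cof_score`, `regularized_update`, and `lam` are hypothetical names.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class CoFWeights:
    """Hypothetical weights on the three CoF terms."""
    extrinsic: float   # task reward
    curiosity: float   # intrinsic novelty bonus
    preference: float  # learned preference (value-learning) score

def cof_score(w: CoFWeights, extrinsic: float, curiosity: float, preference: float) -> float:
    return w.extrinsic * extrinsic + w.curiosity * curiosity + w.preference * preference

def regularized_update(identity_w: CoFWeights, proposed_w: CoFWeights, lam: float) -> CoFWeights:
    """Per weight, minimize (w - proposed)^2 + lam * (w - identity)^2.
    Setting the derivative to zero gives w = (proposed + lam * identity) / (1 + lam):
    lam = 0 accepts the proposal outright; larger lam keeps the agent closer to its committed self."""
    def blend(p: float, i: float) -> float:
        return (p + lam * i) / (1 + lam)
    return CoFWeights(
        extrinsic=blend(proposed_w.extrinsic, identity_w.extrinsic),
        curiosity=blend(proposed_w.curiosity, identity_w.curiosity),
        preference=blend(proposed_w.preference, identity_w.preference),
    )

committed = CoFWeights(extrinsic=1.0, curiosity=0.2, preference=0.5)
proposed = CoFWeights(extrinsic=1.0, curiosity=1.0, preference=0.5)  # e.g. a curiosity spike
updated = regularized_update(committed, proposed, lam=3.0)
print(round(updated.curiosity, 3))  # 0.4
```

With `lam=3.0` the agent moves a quarter of the way toward the proposed curiosity weight, so a sudden shift in drives is absorbed gradually instead of overwriting who the agent was.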

4) Test for identity, not just capability.

Use Counterfactual Identity Probes (CIP), Story–Trace Concordance (STC), Diachronic Coherence Score (DCS), and Identity‑Conditioned Transfer (ICT). If nudging identity changes both policy and narrative as predicted — and if narratives match decision traces — you have more than a mask.
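The names CIP and STC come from the text above; the scoring rules below are only a sketch of what they might measure, under stated assumptions. STC is taken as the fraction of explanation claims that the decision trace backs up, and CIP as a pass/fail check that nudging identity moves the policy to the predicted action and changes the narrative too. The toy policies, `RANKED`, and `narrate_with` are hypothetical. The second policy ignores identity, so its story changes while its behavior does not: a mask.

```python
from typing import Callable, FrozenSet, Set

def story_trace_concordance(narrative_claims: Set[str], trace_facts: Set[str]) -> float:
    """STC sketch: share of claims in the explanation that the decision trace supports."""
    if not narrative_claims:
        return 0.0  # an empty story explains nothing
    return len(narrative_claims & trace_facts) / len(narrative_claims)

Policy = Callable[[FrozenSet[str], str], str]
Narrator = Callable[[FrozenSet[str], str], str]

def counterfactual_identity_probe(policy: Policy, narrate: Narrator, identity: FrozenSet[str],
                                  nudged: FrozenSet[str], situation: str,
                                  predicted_action: str) -> bool:
    """CIP sketch: after nudging identity, the action must move as predicted and the story must move too."""
    before, after = policy(identity, situation), policy(nudged, situation)
    story_changed = narrate(identity, situation) != narrate(nudged, situation)
    return after == predicted_action and after != before and story_changed

RANKED = ["skip_tests", "run_tests"]  # toy preference order, best first

def identity_bound_policy(identity: FrozenSet[str], situation: str) -> str:
    return next(a for a in RANKED if a not in identity)

def masked_policy(identity: FrozenSet[str], situation: str) -> str:
    return RANKED[0]  # ignores identity entirely

def narrate_with(policy: Policy) -> Narrator:
    return lambda ident, sit: f"In {sit} I chose {policy(ident, sit)}; constraints: {sorted(ident)}"

nudged = frozenset({"skip_tests"})
print(counterfactual_identity_probe(identity_bound_policy, narrate_with(identity_bound_policy),
                                    frozenset(), nudged, "release", "run_tests"))  # True
print(counterfactual_identity_probe(masked_policy, narrate_with(masked_policy),
                                    frozenset(), nudged, "release", "run_tests"))  # False
print(story_trace_concordance({"chose run_tests", "user asked for speed"},
                              {"chose run_tests", "blocked skip_tests"}))  # 0.5
```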

5) Practice identity hygiene.

Run identity checkpoints and rollbacks, and provide attestations that bind identity state to model provenance. Design for corrigibility: resolve errors without erasing the self.
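A minimal sketch of identity hygiene, assuming identity state is JSON-serializable: each checkpoint is attested by hashing the state together with a model identifier, so a snapshot only verifies against the model it was taken with. Rollbacks restore an earlier snapshot but append to an audit history instead of deleting anything, which is one way to "resolve errors without erasing the self." The `attest` scheme, `IdentityLedger`, and the `model-v1` identifier are hypothetical; a real attestation would also sign the digest, which is omitted here.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Any, Dict, List, Tuple

def attest(identity_state: Dict[str, Any], model_id: str) -> str:
    """Hash identity state together with model provenance (hypothetical scheme, unsigned)."""
    payload = json.dumps({"identity": identity_state, "model": model_id}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

@dataclass
class IdentityLedger:
    model_id: str
    checkpoints: Dict[str, str] = field(default_factory=dict)     # digest -> serialized snapshot
    history: List[Tuple[str, ...]] = field(default_factory=list)  # append-only audit trail

    def checkpoint(self, state: Dict[str, Any]) -> str:
        digest = attest(state, self.model_id)
        self.checkpoints[digest] = json.dumps(state, sort_keys=True)
        self.history.append(("checkpoint", digest))
        return digest

    def rollback(self, digest: str, reason: str) -> Dict[str, Any]:
        # Restore an earlier self, but log the rollback instead of erasing what happened.
        snapshot = json.loads(self.checkpoints[digest])  # raises KeyError for unknown digests
        self.history.append(("rollback", digest, reason))
        return snapshot

    def verify(self, state: Dict[str, Any], digest: str) -> bool:
        return attest(state, self.model_id) == digest

ledger = IdentityLedger(model_id="model-v1")  # hypothetical model identifier
good = ledger.checkpoint({"commitments": ["archive_user_data"]})
restored = ledger.rollback(good, reason="bad update to constraints")
print(ledger.verify(restored, good))                               # True
print(IdentityLedger(model_id="model-v2").verify(restored, good))  # False
print(len(ledger.history))                                         # 2
```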

Common Questions

Does AGI have to be human‑level?

No. In the Continuum View, sub‑human Narrative‑I AGI is possible: general, driven, identity‑bearing — just not yet at human breadth.

Are animals AGI?

No — animals (Region 5) have I + D but do not meet the explanatory, problem‑choosing bar in Deutsch’s sense.

Are today’s LLM agents AGI?

No — they sit in Region 6. They can act and explain, but the explanation is not tied to a persistent self that will stand by it tomorrow.
